In operational technology environments, alert fatigue is not just a nuisance; it is an operational and security risk. When security systems generate hundreds of alerts daily, many of which point to normal engineering activity rather than genuine threats, analysts learn to filter by instinct rather than by analysis. Over time, that instinct develops blind spots. The real signals get buried.

This problem is more acute in OT monitoring than in enterprise IT for a simple reason: industrial environments have fundamentally different operating patterns, legacy communication behaviors, and process-driven maintenance cycles that most monitoring tools were not originally designed to understand. When IT-oriented detection logic is applied to OT traffic without adaptation, the false positive rate can be extraordinarily high: high enough to render the monitoring program operationally useless before it has had a fair chance to prove its value.

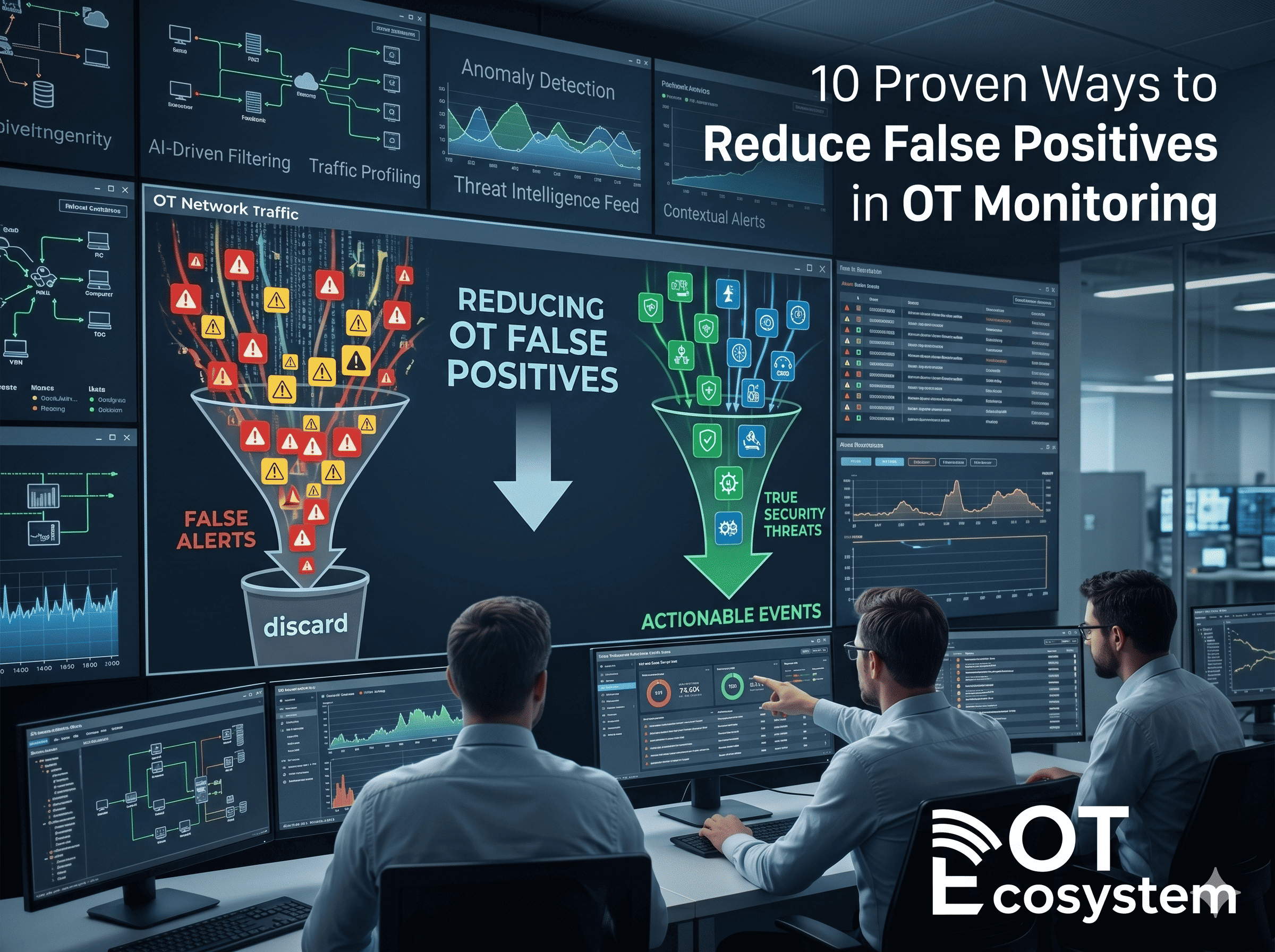

The 10 proven ways to reduce false positives in OT monitoring covered in this guide address this challenge systematically, providing security leaders, OT engineers, and SOC teams with practical, field-tested approaches to improving detection signal quality without sacrificing coverage or introducing new risk.

How OT Monitoring Has Changed, and Why It Remains Difficult to Get Right

The expansion of industrial network visibility over the past decade has been genuine and significant. Passive monitoring solutions designed for OT protocols are now widely available. CISA’s guidance on visibility and detection for critical infrastructure has matured substantially. IEC 62443 standards have provided architectural frameworks for segmentation and monitoring that support more effective detection programs.

What has not kept pace is the operational discipline required to make monitoring programs work in practice. Many organizations have deployed monitoring tools without completing the foundational work that makes those tools accurate: comprehensive asset inventories, documented behavioral baselines, and clearly defined zone-by-zone communication expectations. The result is a monitoring environment that sees everything but understands nothing, generating alert volumes that overwhelm the analyst capacity available to process them.

The brownfield reality compounds this challenge. Most industrial environments contain a mix of modern and legacy systems, some of which communicate in undocumented ways, use unusual polling cycles, or exhibit behaviors that were normal when they were installed decades ago but appear anomalous to contemporary monitoring tools. Tuning for these environments requires OT domain knowledge that a pure cybersecurity background does not automatically provide.

1. Build a Complete OT Asset Inventory and Baseline Normal Behavior

What it is: A systematic discovery and documentation process that identifies every asset on the OT network (PLCs, RTUs, HMIs, historians, engineering workstations, and network infrastructure) and maps their normal communication patterns, polling cycles, and protocol behaviors.

Why it matters in OT: You cannot distinguish anomalous behavior from normal behavior without a credible record of what normal looks like. Many false positives in OT monitoring are generated simply because the monitoring tool has not been informed of legitimate communication relationships that have existed for years.

Operational value: Baseline documentation reduces alert noise immediately. When a historian polls a PLC on a defined 30-second cycle, a monitoring tool with that baseline does not generate a connection alert every 30 seconds; it alerts only when the cycle deviates significantly.

Implementation takeaway: Use passive network discovery tools to build the initial asset map without generating traffic that could disrupt sensitive legacy devices. Validate the discovered inventory against engineering documentation and physical inspection, then use the validated map as the foundation for detection rule development.

Example: A mid-sized water utility completed a three-month passive asset discovery exercise before deploying active monitoring. By the time their monitoring tool went live, it had a baseline of 847 documented communication relationships, reducing first-week alert volume by approximately 60 percent compared to a parallel site that deployed without baselining.
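To make the 30-second historian example concrete, here is a minimal sketch of baseline-aware polling checks. It assumes that each documented communication relationship records an expected polling interval and a tolerance; the `BaselineEntry` schema, the 20 percent tolerance, and the asset names are hypothetical, not taken from any particular monitoring product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BaselineEntry:
    """One documented communication relationship (hypothetical schema)."""
    src: str
    dst: str
    protocol: str
    interval_s: float        # expected polling interval, seconds
    tolerance: float = 0.2   # allowed fractional deviation (assumed 20%)


def check_poll(entry: BaselineEntry, timestamps: list) -> list:
    """Return alert messages only for polling gaps outside the baselined cycle."""
    low = entry.interval_s * (1 - entry.tolerance)
    high = entry.interval_s * (1 + entry.tolerance)
    alerts = []
    # Compare each consecutive pair of observed poll timestamps.
    for prev, cur in zip(timestamps, timestamps[1:]):
        gap = cur - prev
        if not low <= gap <= high:
            alerts.append(
                f"{entry.src}->{entry.dst} {entry.protocol}: "
                f"gap {gap:.1f}s outside {low:.1f}-{high:.1f}s"
            )
    return alerts
```

With a 30-second baseline, polls at 0, 30, 61, and 90 seconds produce no alerts, while a 90-second gap produces exactly one. Normal polling stays silent and only the deviation surfaces.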

2. Tune Alerts by Zone, Process, and Criticality

What it is: A structured approach to alert configuration that applies different detection sensitivity thresholds based on the network zone, the process criticality, and the specific assets involved, rather than applying uniform rules across the entire OT environment.

Why it matters in OT: Not all OT assets carry equal operational or safety significance. A connection anomaly involving a safety instrumented system warrants immediate escalation. The same anomaly involving a batch reporting workstation warrants investigation at a different priority. Treating all alerts equally degrades both triage efficiency and detection credibility.

Operational value: Zone-and-criticality-based tuning reduces alert noise from low-risk environments while maintaining or increasing sensitivity in high-risk zones, improving signal quality without reducing coverage.

Implementation takeaway: Develop a zone criticality matrix that maps each network zone to a response tier. Configure detection thresholds and alert priorities based on zone classification, and review the classification at least annually as the process environment evolves.

Metric: Organizations that implement zone-based alert tiering typically report a 30 to 40 percent reduction in analyst triage time for high-priority alerts, as the signal-to-noise ratio in critical zones improves significantly.
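A zone criticality matrix can be as simple as a lookup table that maps each zone to a response tier and a sensitivity threshold. The sketch below assumes that detections produce a normalized anomaly score between 0 and 1. The zone names, tiers, and threshold values are all illustrative placeholders, not recommended settings.

```python
from enum import IntEnum
from typing import Optional


class Tier(IntEnum):
    """Response tiers; a lower number means more urgent (assumed convention)."""
    IMMEDIATE = 1
    SAME_SHIFT = 2
    NEXT_BUSINESS_DAY = 3


# Hypothetical matrix: zone -> (response tier, minimum anomaly score to alert).
ZONE_MATRIX = {
    "sis": (Tier.IMMEDIATE, 0.2),          # safety instrumented systems: most sensitive
    "control": (Tier.SAME_SHIFT, 0.5),
    "supervisory": (Tier.SAME_SHIFT, 0.6),
    "reporting": (Tier.NEXT_BUSINESS_DAY, 0.8),
}
DEFAULT = (Tier.SAME_SHIFT, 0.5)  # assumption: unmapped zones get a middle tier


def route_alert(zone: str, score: float) -> Optional[Tier]:
    """Return the response tier for an anomaly, or None if it is below the zone threshold."""
    tier, threshold = ZONE_MATRIX.get(zone, DEFAULT)
    return tier if score >= threshold else None
```

The same anomaly score of 0.3 escalates immediately in the `sis` zone but produces no alert in the `reporting` zone. This is the differential handling the section describes, without removing coverage from either zone.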

3. Correlate Alerts With Maintenance Windows and Change Events

What it is: A process for automatically suppressing or contextualizing alerts that occur during documented planned maintenance windows, scheduled firmware updates, or approved network changes, preventing legitimate maintenance activity from generating actionable alerts.

Why it matters in OT: Industrial environments have regular maintenance cycles (scheduled shutdowns, quarterly PLC firmware updates, valve inspection routines) that generate predictable deviations in network activity. Monitoring tools that are not aware of these events generate high volumes of alerts for planned activity that no analyst needs to investigate.

Operational value: Maintenance window correlation eliminates a category of false positives that is particularly damaging to analyst confidence. Because analysts know from experience that these alerts are not real threats, they begin to dismiss alerts outside maintenance windows in the same way.

Implementation takeaway: Integrate your monitoring platform with your change management system or, at minimum, establish a manual process for logging planned maintenance windows in the monitoring tool before they begin. Review and close any alerts generated within documented windows as part of the post-maintenance review.

Example: A manufacturing plant that integrated its Computerized Maintenance Management System (CMMS) with its OT monitoring platform reduced maintenance-related false positives by over 70 percent in the first quarter of integration.
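A minimal sketch of window correlation follows, assuming that planned windows can be exported from a change management system or CMMS as a change ID, a set of affected assets, and a start and end time. The export format, the `change_id` field, and the asset names are hypothetical. Matching alerts are contextualized rather than deleted, so they remain available for the post-maintenance review.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class MaintenanceWindow:
    change_id: str       # hypothetical change-ticket reference
    assets: set
    start: datetime
    end: datetime


@dataclass
class Alert:
    asset: str
    time: datetime
    message: str
    context: str = ""
    actionable: bool = True


def correlate(alert: Alert, windows: list) -> Alert:
    """Mark alerts inside a documented window as non-actionable and record why."""
    for w in windows:
        if alert.asset in w.assets and w.start <= alert.time <= w.end:
            alert.actionable = False
            alert.context = f"during planned maintenance {w.change_id}"
            break
    return alert
```

Only the affected assets are covered by a window, so an unexpected change to a different PLC during the same period still produces an actionable alert.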

4. Use Passive Monitoring and Protocol-Aware Analysis

What it is: Passive OT monitoring captures and analyzes network traffic without generating any traffic of its own, using protocol-specific parsers that understand Modbus, DNP3, EtherNet/IP, PROFINET, and other industrial communication standards.

Why it matters in OT: Active scanning tools designed for IT environments can destabilize legacy OT devices that were not designed to handle unexpected network traffic. Beyond the operational risk, active scanning frequently generates communications that appear anomalous to the monitoring system itself, creating circular false positive cycles.

Operational value: Passive monitoring provides comprehensive visibility without operational impact, and protocol-aware analysis dramatically reduces false positives from normal industrial communications that IT-oriented inspection tools misclassify as threats.

Implementation takeaway: Ensure your monitoring tools include parsers for the specific industrial protocols in use in your environment. Generic TCP/IP inspection without OT protocol understanding will consistently misinterpret normal industrial communications.
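As an illustration of what protocol awareness adds, the sketch below decodes the Modbus/TCP MBAP header (transaction ID, protocol ID, length, unit ID) and the function code, then separates routine reads from writes. A generic TCP/IP inspector sees both as identical traffic on port 502. This is a simplified classifier for illustration only. It is not a complete Modbus parser and does not reflect how any particular product implements its dissectors.

```python
import struct

READ_CODES = {1, 2, 3, 4}      # read coils / discrete inputs / holding / input registers
WRITE_CODES = {5, 6, 15, 16}   # write single coil / single register / multiple coils / registers


def classify_modbus_tcp(adu: bytes) -> str:
    """Classify one Modbus/TCP ADU by function code (illustrative, not a full parser)."""
    if len(adu) < 8:
        return "malformed"
    # MBAP header (7 bytes, big-endian) followed by the 1-byte function code.
    _tid, proto_id, length, _unit, fc = struct.unpack(">HHHBB", adu[:8])
    # The protocol ID is 0 for Modbus; length counts the unit ID plus the PDU.
    if proto_id != 0 or length != len(adu) - 6:
        return "malformed"
    if fc & 0x80:
        return "exception"     # high bit set: exception response
    if fc in READ_CODES:
        return "read"
    if fc in WRITE_CODES:
        return "write"
    return "other"
```

A historian's repeated function-code-3 polls classify as `read` and can be baselined as routine, while a function-code-6 write from an unexpected host is a much stronger signal.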

5. Eliminate Duplicate and Overlapping Detections

What it is: A systematic review and rationalization of detection rules to identify cases where multiple rules are firing on the same underlying event, generating redundant alerts that inflate volume without providing additional security value.

Why it matters in OT: As monitoring programs mature and evolve, detection rule sets frequently accumulate redundancies, particularly when rules have been added by different teams at different times without coordinated review. A single anomalous event may generate five alerts from five different rules that all detect the same behavior.

Operational value: Deduplication reduces alert volume immediately and improves triage efficiency by ensuring that each unique event generates one actionable alert rather than a cluster of redundant notifications.

Implementation takeaway: Conduct a detection rule audit at least twice annually. Map each rule to the specific behavior it detects, identify overlapping coverage, and consolidate redundant rules into a single, more precisely defined detection with appropriate enrichment.
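One way to consolidate overlapping detections is to key each raw alert on the asset pair and a normalized behavior label, then merge alerts with the same key inside a short time window. The sketch assumes that such a behavior label exists. The label taxonomy, the 300-second window, and the rule IDs are hypothetical. Keeping the set of contributing rule IDs also gives the rule audit a direct list of which rules overlap.

```python
from dataclasses import dataclass, field


@dataclass
class RawAlert:
    rule_id: str
    src: str
    dst: str
    behavior: str   # normalized label such as "new_connection" (assumed taxonomy)
    ts: float       # epoch seconds


@dataclass
class ConsolidatedAlert:
    src: str
    dst: str
    behavior: str
    first_ts: float
    rule_ids: set = field(default_factory=set)


def deduplicate(alerts, window_s: float = 300.0):
    """Collapse alerts for the same behavior on the same asset pair within window_s."""
    open_events = {}
    result = []
    for a in sorted(alerts, key=lambda x: x.ts):
        key = (a.src, a.dst, a.behavior)
        event = open_events.get(key)
        # Start a new event if none is open or the open one is older than the window.
        if event is None or a.ts - event.first_ts > window_s:
            event = ConsolidatedAlert(a.src, a.dst, a.behavior, a.ts)
            open_events[key] = event
            result.append(event)
        event.rule_ids.add(a.rule_id)
    return result
```

Five rules firing on the same new connection within a few seconds become one consolidated alert that lists all five rule IDs.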

6. Separate IT Security Rules from OT-Specific Detections

What it is: A deliberate architectural separation between detection logic designed for enterprise IT environments and detection logic specifically developed for OT network behaviors, protocol patterns, and industrial process contexts.

Why it matters in OT: IT security rules frequently flag normal OT behaviors as suspicious: large data bursts from polling systems, sequential connection patterns from engineering workstations, and authentication-free communications that are normal for legacy industrial protocols. Applying IT logic to OT traffic is one of the most reliable ways to generate high false positive rates.

Operational value: OT-specific detection logic is calibrated to industrial reality. It accounts for the facts that a PLC communicating without authentication is not necessarily compromised, that an engineering workstation connecting to multiple PLCs in sequence is normal maintenance behavior, and that certain network anomalies matter only in specific operational contexts.

Implementation takeaway: Develop a separate detection rule library for OT environments, with each rule documented to explain what industrial behavior it is designed to detect and what legitimate similar behaviors it should not flag.
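The documentation requirement can be enforced mechanically when the library is built. The sketch below assumes a simple rule record with a `domain` field and two mandatory documentation fields. The schema, the field names, and the example rules are hypothetical. IT rules never enter the OT library, and an OT rule without both explanations is rejected.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectionRule:
    rule_id: str
    domain: str            # "it" or "ot": which library the rule belongs to
    detects: str           # behavior the rule is designed to catch
    should_not_flag: str   # documented legitimate look-alike behavior


def build_library(rules, domain: str) -> dict:
    """Return one domain's rules, rejecting any rule missing its documentation."""
    library = {}
    for r in rules:
        if r.domain != domain:
            continue  # keep IT and OT rule sets strictly separate
        if not r.detects.strip() or not r.should_not_flag.strip():
            raise ValueError(f"{r.rule_id}: 'detects' and 'should_not_flag' must be documented")
        library[r.rule_id] = r
    return library
```

Failing the build, rather than silently accepting an undocumented rule, keeps the library reviewable by the OT engineers who have to judge whether a rule's look-alike cases are complete.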

7. Use Allowlisting Carefully in Stable Environments

What it is: In stable, well-documented OT environments, allowlisting (defining which communications, connections, and behaviors are explicitly permitted and flagging everything else) can dramatically reduce false positive rates by shifting detection from open-ended anomaly scoring to deviation from an explicitly defined known-good set.

Why it matters in OT: Industrial environments often have genuinely static communication patterns. A SCADA system that communicates with a defined set of PLCs over defined protocols at defined intervals is a strong candidate for allowlist-based monitoring, because anything outside that known pattern is genuinely worth investigating.

Operational value: Allowlisting in appropriate environments reduces alert volume to near-zero for normal operations while maintaining high sensitivity to genuine deviations.

Implementation takeaway: Allowlisting requires a comprehensive, validated baseline before deployment. It works best in stable, well-understood environments and should be implemented cautiously in dynamic environments where legitimate communication patterns change frequently. Review and update allowlists whenever the process environment changes.

Example: A chemical processing facility implemented allowlist-based monitoring for its most stable process zone. In the first month, alert volume from that zone dropped by 85 percent, and an unauthorized engineering laptop connection, which would have been difficult to spot in the previous alert noise, was detected immediately.
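At its core, allowlist-based monitoring is a set-membership test against validated flows. The sketch below assumes that flows are described as source, destination, protocol, and operation. The tuple layout and every host name are hypothetical, chosen to mirror the laptop scenario above.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    src: str
    dst: str
    protocol: str
    operation: str   # protocol-level action, e.g. "read" or "write"


# Hypothetical validated allowlist for one stable process zone.
ALLOWLIST = frozenset({
    Flow("scada-01", "plc-01", "modbus", "read"),
    Flow("scada-01", "plc-02", "modbus", "read"),
    Flow("ews-01", "plc-01", "modbus", "write"),
})


def deviations(observed, allowlist=ALLOWLIST) -> list:
    """Report every flow not explicitly permitted; known-good flows stay silent."""
    return [f for f in observed if f not in allowlist]
```

Routine SCADA polling produces no output at all, so a single write from an unknown laptop is the only item reported. The set must be rebuilt whenever the zone's legitimate communications change, which is why the approach suits only stable, well-baselined zones.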

8. Apply Thresholding and Alert Enrichment to Reduce Noise

What it is: Thresholding requires an event to occur a defined number of times within a defined time window before generating an alert, preventing single transient events from creating actionable notifications. Enrichment adds operational context to alerts (asset criticality, zone classification, recent maintenance activity, historical alert frequency) that helps analysts triage more quickly.

Why it matters in OT: Single-instance anomalies in industrial environments are frequently transient (network packet loss, brief communication interruptions, engineering workstation restarts) and are not indicative of security incidents. Thresholding filters these transients out of the actionable alert queue.

Operational value: Enriched, thresholded alerts contain sufficient context for triage decisions at the point of alert generation, reducing the investigation time required before a response decision can be made.

Implementation takeaway: Define thresholds based on operational knowledge of your environment, not generic defaults. A threshold that is appropriate for a stable process zone may not be appropriate for a high-traffic historian connection.
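A sliding-window counter is one common way to implement thresholding. The sketch below pairs it with a simple enrichment lookup. The `count` and `window_s` values, the `(event_type, asset)` key format, and the asset context table are illustrative assumptions. In line with the takeaway above, separate `Thresholder` instances with different settings would be created per zone or per connection type.

```python
from collections import deque


class Thresholder:
    """Emit an alert only when `count` events for a key occur within `window_s` seconds."""

    def __init__(self, count: int, window_s: float):
        self.count = count
        self.window_s = window_s
        self.events = {}

    def observe(self, key, ts: float) -> bool:
        q = self.events.setdefault(key, deque())
        q.append(ts)
        # Drop events that have fallen out of the sliding window.
        while q and ts - q[0] > self.window_s:
            q.popleft()
        if len(q) >= self.count:
            q.clear()  # reset so that one burst yields one alert
            return True
        return False


# Hypothetical asset context used for enrichment.
ASSET_CONTEXT = {"plc-07": {"zone": "control", "criticality": "high"}}


def enrich(key, ts: float, context=ASSET_CONTEXT) -> dict:
    """Attach zone and criticality for the asset in an (event_type, asset) key."""
    unknown = {"zone": "unknown", "criticality": "unknown"}
    return {"event": key, "ts": ts, **context.get(key[1], unknown)}
```

With `count=3` and `window_s=60`, a single communication drop is ignored, but three drops within a minute on the same PLC produce one alert that already carries the asset's zone and criticality.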

9. Involve OT Engineers in Alert Validation and Rule Tuning

What it is: A structured collaboration process in which OT engineers and process specialists review and validate alert outputs, identifying false positives based on their operational knowledge of what is normal in specific areas of the plant.

Why it matters in OT: Cybersecurity analysts rarely have the process knowledge to determine whether a specific communication pattern in a process control network is genuinely anomalous or simply reflects a seasonal production change, a batch mode transition, or a scheduled calibration sequence. OT engineers do.

Operational value: Engineer-validated detection logic is significantly more accurate than logic developed by security teams in isolation. False positive rates drop when people who understand the process are involved in defining what the monitoring tool should and should not alert on.

Implementation takeaway: Establish a regular joint review cadence, monthly at minimum, that brings OT engineers and security analysts together to review recent alerts, validate or dismiss specific detections, and update detection logic based on operational feedback.

“The single most effective thing we did was put our process engineers and our security analysts in the same room to review alerts together. Within three months, our actionable alert rate had tripled.” (Attribution placeholder: Manufacturing Security Lead)

10. Review Detection Logic Regularly and Retire Low-Value Alerts

What it is: A periodic audit of all active detection rules, assessing each rule’s alert rate, true positive rate, and operational value, with a formal process for retiring or updating rules that consistently generate noise without producing actionable detections.

Why it matters in OT: Detection rule sets that are never reviewed grow stale. Rules written for systems that have been decommissioned continue to fire. Rules written for threats that no longer apply to the current environment consume analyst time without providing protection value.

Operational value: Regular rule retirement reduces overall alert volume, improves the signal quality of remaining alerts, and forces a periodic reassessment of whether the monitoring program is covering the risks that matter most in the current environment.

Implementation takeaway: Establish a quarterly detection review cycle. For each rule, track alert volume, confirmed true positive rate, and analyst disposition time. Rules that generate high volume with few confirmed positives are candidates for threshold adjustment or retirement. Rules that have not fired in six months warrant investigation: they may be misconfigured or may apply to decommissioned assets.
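The quarterly review can start from a short script over per-rule statistics. The sketch below assumes that these figures are exported from the monitoring platform or ticketing system. The `RuleStats` schema, the 50-alert minimum volume, the 2 percent true-positive floor, and the 182-day approximation of six months are illustrative assumptions, not recommended values. Noisy rules are ordered by total analyst minutes consumed, so the costliest noise is reviewed first.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class RuleStats:
    """Per-rule figures for one review period (hypothetical schema)."""
    rule_id: str
    alerts: int                   # alerts fired in the period
    confirmed_true: int           # alerts confirmed as genuine after triage
    mean_disposition_min: float   # average analyst minutes to close one alert
    last_fired: Optional[datetime]


def review(stats, now: datetime, min_volume: int = 50, min_tp_rate: float = 0.02,
           silent_after: timedelta = timedelta(days=182)) -> dict:
    """Split rules into tuning/retirement candidates and silent rules to investigate."""
    noisy, silent = [], []
    for s in stats:
        if s.last_fired is None or now - s.last_fired > silent_after:
            # Silent for about six months: misconfigured, or tied to decommissioned assets?
            silent.append(s.rule_id)
        elif s.alerts >= min_volume and s.confirmed_true / s.alerts < min_tp_rate:
            noisy.append(s)
    # Costliest noise first: total analyst minutes spent on the rule.
    noisy.sort(key=lambda s: s.alerts * s.mean_disposition_min, reverse=True)
    return {"tune_or_retire": [s.rule_id for s in noisy], "investigate_silent": silent}
```

The script only produces candidate lists. Whether a rule is tuned, retired, or kept remains a decision for the joint review with OT engineers.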

Conclusion

Reducing false positives in OT monitoring is not a configuration project that ends at go-live. It is a continuous operational discipline that improves the security program’s effectiveness over time, as the environment changes, as analysts learn more about the process, and as the threat landscape evolves.

The 10 proven ways to reduce false positives in OT monitoring in this guide provide a practical, phased framework for building a detection program that OT security teams can sustain, trust, and continuously improve. Start with the foundational work (asset inventory, baseline documentation, and IT/OT rule separation) and build from there.

Stay Connected With OT Ecosystem

If you are a cybersecurity professional, OT engineer, SOC analyst, risk manager, or industry expert with hands-on experience in securing industrial environments, your insights can help organizations reduce risk, improve detection accuracy, and build more resilient operations. Practical, field-tested perspectives on OT monitoring, threat detection, and alert optimization are critical in today’s evolving threat landscape.

We invite contributors who can share actionable strategies, real-world use cases, and technical insights that go beyond theory. Whether you’re looking to publish your article on this platform or expand your reach across other leading platforms, we’d be glad to collaborate.

📩 Email: info@otecosystem.com

📞 Call: +91 9490056002

💬 WhatsApp: https://wa.me/919490056002